How Video Game Graphics Technology Works

The Evolution of Video Game Graphics Technology

Imagine stepping into a sprawling digital world where sunlight filters through ancient trees and every raindrop on a character's armor feels impossibly real. This immersive experience is made possible by the rapid evolution of video game graphics technology, a complex mix of hardware power and clever software artistry. It transforms raw lines of code into breathtaking vistas and intense action sequences that define modern gaming.

At its core, this technology acts as a bridge between the mathematical instructions of a game engine and the image you see on your screen. It requires a constant, high-speed conversation between the software that describes the scene and the hardware that draws it, pixel by pixel. Understanding this process reveals the ingenuity required to build digital worlds that look, feel, and behave in believable ways.

The Building Blocks of Video Game Graphics Technology

Every object you encounter in a game begins as a collection of simple geometric shapes, most commonly triangles. These triangles are joined into intricate structures known as meshes, which serve as the foundation for everything from a player character to a distant mountain range. Each triangle is defined by three vertices, which are points in three-dimensional space, and neighboring triangles share vertices so that the mesh forms one continuous surface.
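To make this concrete, here is a minimal Python sketch of an indexed triangle mesh, assuming the common layout of a vertex list plus a list of index triples (real engines keep this data in GPU buffers alongside extra per-vertex attributes). The square below needs only four vertices for two triangles because the corners on the diagonal are shared; `triangle_corners` is a hypothetical helper for illustration.

```python
# A minimal indexed triangle mesh: a unit square built from two triangles.
# Vertices are (x, y, z) points; each triangle lists three vertex indices.
vertices = [
    (0.0, 0.0, 0.0),  # 0: bottom-left
    (1.0, 0.0, 0.0),  # 1: bottom-right
    (1.0, 1.0, 0.0),  # 2: top-right
    (0.0, 1.0, 0.0),  # 3: top-left
]
triangles = [
    (0, 1, 2),  # lower-right triangle
    (0, 2, 3),  # upper-left triangle (reuses vertices 0 and 2)
]

def triangle_corners(mesh_vertices, tri):
    """Look up the three 3D points that make up one triangle."""
    return [mesh_vertices[i] for i in tri]

for tri in triangles:
    print(triangle_corners(vertices, tri))
```

Storing indices instead of repeating coordinates keeps shared vertices in one place, so moving a vertex moves every triangle that uses it.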

Once the basic shape is formed, it must be filled in to look like a solid object rather than a skeletal framework. This is where the game engine applies textures, which act like skins wrapped around the geometric mesh. These textures provide the color, detail, and surface characteristics that allow a blocky shape to transform into a wooden crate or a stone wall.
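Each vertex of a mesh usually carries a pair of texture coordinates, commonly called UV coordinates, that say which point of the image lands on that part of the surface. The sketch below assumes a texture stored as a plain Python list of RGB tuples and uses simple nearest-neighbor lookup (GPUs typically also offer filtered sampling such as bilinear); `sample_nearest` is a hypothetical name. It shows how a UV pair in the range 0 to 1 becomes a color.

```python
# A tiny 2x2 "texture": rows of RGB colors (0-255).
texture = [
    [(139, 90, 43), (160, 110, 60)],   # row 0 (top): two wood-brown tones
    [(120, 75, 35), (180, 130, 80)],   # row 1 (bottom)
]

def sample_nearest(tex, u, v):
    """Return the texel nearest to texture coordinates (u, v), each in [0, 1].

    Here u runs left to right and v runs top to bottom; engines and APIs
    differ on which direction v points, so treat this as one convention.
    """
    height = len(tex)
    width = len(tex[0])
    # Clamp so that u or v == 1.0 maps to the last texel instead of overflowing.
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return tex[y][x]

print(sample_nearest(texture, 0.1, 0.1))  # top-left texel
print(sample_nearest(texture, 0.9, 0.9))  # bottom-right texel
```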

The Powerhouse Behind the Scenes

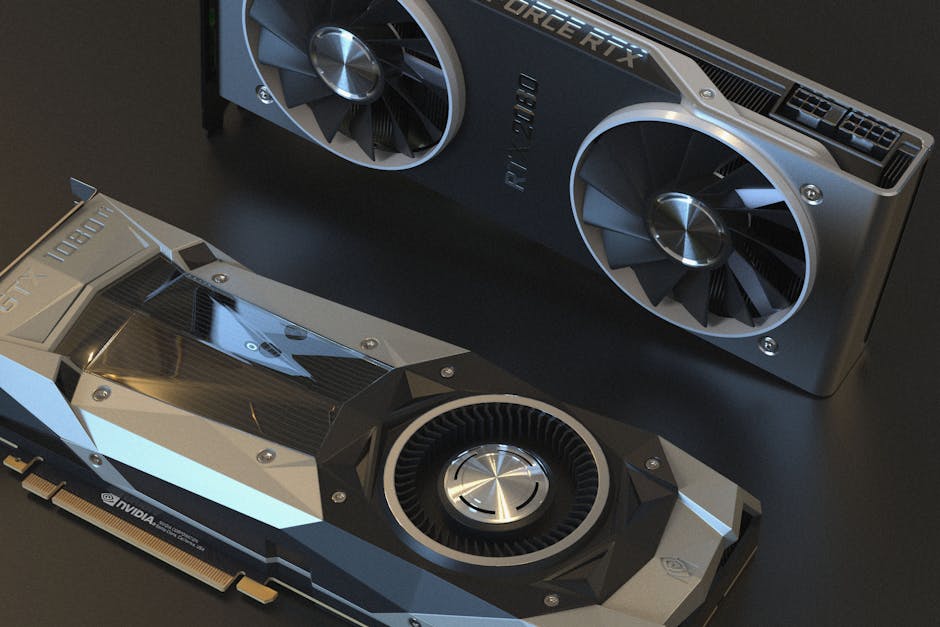

The Graphics Processing Unit, or GPU, is the hardware that carries out these calculations at blistering speeds. Unlike a central processing unit (CPU), which excels at working through a handful of tasks very quickly, the GPU is designed for massive parallel processing. It performs the thousands of small, simultaneous calculations needed to render the millions of pixels on your screen every single frame.
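A quick back-of-the-envelope calculation shows why this parallelism matters. Assuming a 1920×1080 display refreshed at 60 frames per second and just one shading calculation per pixel (real games usually do far more work per pixel), the GPU must color over 124 million pixels every second:

```python
def pixels_per_second(width, height, frames_per_second):
    """Pixels that must be shaded each second, assuming one shading pass per pixel."""
    return width * height * frames_per_second

full_hd = pixels_per_second(1920, 1080, 60)
print(f"{full_hd:,} pixels per second")  # 124,416,000 pixels per second
```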

When you move the camera in a game, the GPU must recalculate where every visible object appears on screen, adjust lighting for the new viewpoint, and redraw the entire image, typically 30, 60, or more times per second. This constant, high-speed operation is what makes modern games feel smooth and responsive. Without this specialized hardware, the high-fidelity environments we take for granted would be impossible to render in real time.
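Recalculating where an object appears starts with projecting each of its vertices from 3D space onto the 2D screen. Here is a simplified Python sketch of a pinhole perspective projection, assuming a camera at the origin looking down the +z axis with a given horizontal field of view; `project_to_screen` is a hypothetical name, and real engines express this step with 4×4 matrices on the GPU followed by clipping and a viewport transform. The key step is the divide by depth, which is why distant objects appear smaller.

```python
import math

def project_to_screen(point, fov_degrees, width, height):
    """Project a camera-space point onto the screen with a simple pinhole model.

    Assumes the camera sits at the origin looking down +z, with +y up,
    and that the point is in front of the camera (z > 0).
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point is behind the camera")
    # Focal length in pixels, derived from the horizontal field of view.
    focal = (width / 2) / math.tan(math.radians(fov_degrees) / 2)
    # Perspective divide: farther points (larger z) land closer to the center.
    screen_x = width / 2 + focal * x / z
    screen_y = height / 2 - focal * y / z  # screen y grows downward
    return screen_x, screen_y

# The same point seen from 5 and then 10 units away drifts toward the center.
print(project_to_screen((1.0, 1.0, 5.0), 90, 1920, 1080))
print(project_to_screen((1.0, 1.0, 10.0), 90, 1920, 1080))
```

When the camera moves, every vertex's camera-space coordinates change, so this projection has to be redone for each vertex on every frame.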

How Lighting Defines the Scene

Lighting is perhaps the most critical factor in achieving visual realism, as it dictates how we perceive depth, mood, and material quality. Older games relied heavily on pre-baked lighting, where shadows and highlights were calculated ahead of time and stored in textures before the game was ever played. This technique was cheap to draw, but it could not respond when lights or objects moved.

Modern engines increasingly simulate light in real time, so shadows and reflections respond as lights, objects, and the player move. This simulation combines several kinds of light interaction into a cohesive scene:

- Diffuse lighting determines how light scatters when it hits a matte surface.

- Specular highlights create the glossy, reflective look found on metal or water.

- Ambient occlusion adds soft shadows in crevices to ground objects in their environment.

- Global illumination models how light bounces from surface to surface to fill a room naturally.

By balancing these light interactions, developers create scenes that feel grounded rather than flat. This dynamic approach ensures that a candle in a dark room casts moving shadows as it flickers, significantly boosting the player's immersion.
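The first two interactions in the list have well-known textbook formulas. The sketch below assumes Lambert's cosine law for diffuse light and the Blinn-Phong model for specular highlights, plus a flat ambient term scaled by an ambient-occlusion factor; it computes a single grayscale brightness per surface point and leaves global illumination out entirely. Production engines typically use more physically based models, so treat `shade` (a hypothetical name) as an illustration of the ideas rather than a modern shading pipeline.

```python
import math

def normalize(v):
    """Scale a 3D vector to unit length (assumes it is not all zeros)."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def shade(normal, to_light, to_viewer, shininess=32.0, ambient=0.1, occlusion=1.0):
    """Return a grayscale brightness for one surface point under white light.

    diffuse:  Lambert's cosine law, max(0, N.L)
    specular: Blinn-Phong highlight, max(0, N.H) ** shininess, H = halfway vector
    ambient:  a flat fill term, darkened by an ambient-occlusion factor in [0, 1]
    """
    n = normalize(normal)
    l = normalize(to_light)
    v = normalize(to_viewer)
    diffuse = max(0.0, dot(n, l))
    half = tuple(a + b for a, b in zip(l, v))
    if diffuse > 0.0 and any(half):
        specular = max(0.0, dot(n, normalize(half))) ** shininess
    else:
        specular = 0.0  # no highlight on surfaces facing away from the light
    return ambient * occlusion + diffuse + specular

# Light and viewer straight above a floor: full diffuse plus a bright highlight.
print(shade((0, 0, 1), (0, 0, 1), (0, 0, 1)))
# Light below the surface: only the (half-occluded) ambient term remains.
print(shade((0, 0, 1), (0, 0, -1), (0, 0, 1), occlusion=0.5))
```

Raising `shininess` narrows the highlight, which is one simple way to move a material's look from satin toward polished metal.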

Shaders: The Hidden Artists

Shaders are small, specialized programs that run on the GPU and tell it exactly how to render pixels, vertices, and light. Think of them as the digital artists working in real-time to define the final look of every surface on your screen. They perform the heavy lifting required to make glass look transparent, water appear reflective, or skin look slightly translucent.

Vertex shaders manipulate the geometry of objects, enabling effects like swaying trees or the bending of a character's limbs as they move. Fragment shaders, by contrast, run once for each fragment (a candidate pixel) to determine its color, and sometimes its depth, along with the effect of lighting at that specific point. By coordinating these two types of programs, developers can achieve incredibly varied visual styles, from hyper-realistic textures to stylized, artistic rendering.
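Real shaders are written in GPU shading languages such as GLSL or HLSL, but the division of labor can be sketched in plain Python. In this illustrative example, `sway_vertex_shader` and `tint_fragment_shader` are hypothetical names: the first displaces each vertex of a tree over time, and the second computes a fragment's color from a base color and a light level. On a GPU, each function would run once per vertex or per fragment, massively in parallel.

```python
import math

def sway_vertex_shader(position, time, strength=0.2):
    """Hypothetical vertex shader: bend a tree so its top sways in the wind.

    Runs once per vertex. The offset grows with height (y), so the base of
    the trunk (y = 0) stays planted while the top moves the most.
    """
    x, y, z = position
    offset = strength * y * math.sin(time)
    return (x + offset, y, z)

def tint_fragment_shader(base_color, brightness):
    """Hypothetical fragment shader: scale a base RGB color by a light level.

    Runs once per fragment (a candidate pixel); channels are clamped to 255.
    """
    return tuple(min(255, round(c * brightness)) for c in base_color)

# Run each stage on a couple of inputs, as the GPU would for
# thousands of vertices and millions of fragments in parallel.
trunk_base = sway_vertex_shader((0.0, 0.0, 0.0), time=1.0)
tree_top = sway_vertex_shader((0.0, 3.0, 0.0), time=1.0)
leaf_pixel = tint_fragment_shader((40, 160, 60), brightness=0.75)
print(trunk_base, tree_top, leaf_pixel)
```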

Mastering Texture Mapping and Detail

While geometry defines the shape, textures provide the surface detail that convinces our eyes of an object's material properties. However, basic images are rarely enough on their own to create convincing realism, which is why developers use advanced mapping techniques. These tools allow a flat image to interact with lighting and geometry to create the illusion of depth and texture.

Normal mapping is a standout technique here: a special image stores a surface direction (a normal) for each texel, letting the lighting calculations simulate bumps, cracks, and surface imperfections without adding extra geometric complexity. This allows a flat wall texture to appear as though it has deep, rugged brickwork while saving a large amount of geometry and processing power. When combined with parallax mapping, which shifts texture coordinates according to the viewing angle, these surfaces appear to have genuine depth as the player moves around them.
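Normal maps store a surface direction in each texel's red, green, and blue channels. The sketch below assumes the common tangent-space convention in which each 8-bit channel maps from 0..255 to -1..1 (details such as the sign of the green channel vary between tools and engines); `decode_normal` and `diffuse` are hypothetical helpers. It shows that a texel leaning toward the light receives more diffuse light than a flat one, even though the underlying geometry is the same flat surface.

```python
import math

def decode_normal(rgb):
    """Convert an 8-bit normal-map texel to a unit-length direction vector.

    Assumes each channel maps 0..255 to -1..1, with blue pointing
    straight out of the surface (tangent space).
    """
    n = tuple(c / 255.0 * 2.0 - 1.0 for c in rgb)
    length = math.sqrt(sum(c * c for c in n))
    return tuple(c / length for c in n)

def diffuse(normal, to_light):
    """Lambert diffuse term for unit vectors: max(0, N.L)."""
    return max(0.0, sum(a * b for a, b in zip(normal, to_light)))

# Light arriving at 45 degrees from the +x side.
light = (math.sqrt(0.5), 0.0, math.sqrt(0.5))

flat_texel = (128, 128, 255)    # points straight out: the face of a brick
tilted_texel = (218, 128, 218)  # leans toward +x: the edge of a brick
print(diffuse(decode_normal(flat_texel), light))
print(diffuse(decode_normal(tilted_texel), light))
```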

The Ray Tracing Revolution

Ray tracing represents a massive leap forward because it models the paths light takes far more directly than traditional techniques. Rather than relying on approximations such as pre-computed shadows or screen-space reflections, the engine traces individual rays, usually cast from the camera into the scene, as they strike surfaces, bounce, and interact with the environment. This produces accurate reflections, shadows, and lighting that were previously impractical to achieve in real time.

While demanding on hardware, ray tracing solves the long-standing challenge of creating realistic, dynamic reflections on complex surfaces like glass, polished metal, or wet pavement. It lets light behave much more like it does in the physical world, bringing a new level of coherence to digital environments. As hardware continues to advance, this technology is becoming increasingly common in games that push visual fidelity.
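At the heart of every ray tracer is a geometric question: where does this ray first hit something? Below is a minimal Python sketch, assuming a scene made of a single sphere and a unit-length ray direction, that answers it with the standard ray-sphere quadratic and then computes a mirror-reflection direction, which is the next ray a reflective surface would trace. Real-time ray tracers test rays against millions of triangles using acceleration structures and dedicated hardware, so this only illustrates the core math.

```python
import math

def intersect_sphere(origin, direction, center, radius):
    """Return the distance t to the nearest hit along a unit-length ray, or None.

    Solves |origin + t*direction - center|^2 = radius^2, a quadratic in t.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = sum(d * x for d, x in zip(direction, oc))
    c = sum(x * x for x in oc) - radius * radius
    discriminant = b * b - c
    if discriminant < 0:
        return None  # the ray misses the sphere
    root = math.sqrt(discriminant)
    for t in (-b - root, -b + root):  # nearer root first
        if t > 1e-6:  # ignore hits behind (or exactly at) the ray origin
            return t
    return None

def reflect(direction, normal):
    """Mirror a direction about a unit surface normal: d - 2(d.n)n."""
    d_dot_n = sum(d * n for d, n in zip(direction, normal))
    return tuple(d - 2.0 * d_dot_n * n for d, n in zip(direction, normal))

camera = (0.0, 0.0, 0.0)
ray = (0.0, 0.0, 1.0)  # looking straight down +z
center, radius = (0.0, 0.0, 5.0), 1.0
t = intersect_sphere(camera, ray, center, radius)
hit = tuple(o + t * d for o, d in zip(camera, ray))
normal = tuple((h - c) / radius for h, c in zip(hit, center))
print(t, hit, reflect(ray, normal))
```

A full renderer repeats this for every pixel's ray, then follows reflected rays and casts extra "shadow rays" toward lights to decide whether each hit point is lit.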

Looking Toward the Future

The next frontier for interactive visuals involves leveraging artificial intelligence to enhance performance and detail. Techniques like AI-driven upscaling allow games to render at a lower resolution and then use deep learning to reconstruct a sharp, high-resolution final image. This can substantially raise frame rates with little visible loss in quality, providing a smoother experience on a wider range of hardware.
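The performance gain comes from simple arithmetic: the GPU shades only the pixels of the lower internal resolution, and the upscaler fills in the rest. Assuming, purely as an example, an internal resolution of 2560×1440 upscaled to a 3840×2160 (4K) display, the expensive per-pixel work drops to about 44% of native 4K. The upscaling pass has its own cost, so actual frame-rate gains vary by game and hardware; `shading_fraction` is a hypothetical helper.

```python
def shading_fraction(render_w, render_h, output_w, output_h):
    """Fraction of output pixels actually shaded when rendering at a lower
    internal resolution and upscaling to the output size."""
    return (render_w * render_h) / (output_w * output_h)

fraction = shading_fraction(2560, 1440, 3840, 2160)
print(f"Shading {fraction:.0%} of the 4K pixel count")  # Shading 44% of the 4K pixel count
```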

Furthermore, procedural generation is becoming increasingly sophisticated, allowing engines to create massive, detailed worlds without requiring designers to place every single asset by hand. Combined with advances in lighting and geometry, these tools mean that gaming environments are likely to keep growing more sprawling, intricate, and visually stunning. The gap between digital creation and physical reality continues to shrink, promising even more immersive adventures.
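Much procedural generation rests on seeded pseudo-random functions: the same seed always reproduces the same world, so a vast landscape can be stored as a single number. The sketch below is a deliberately simple stand-in, 1D value noise in Python with a hypothetical `value_noise_terrain` helper, rather than the more elaborate noise functions (such as Perlin or simplex noise) and rule systems engines typically use. It picks random heights at evenly spaced control points and smoothly blends between them to produce a rolling terrain profile.

```python
import random

def value_noise_terrain(seed, length, spacing=8):
    """Generate a 1D terrain height profile (0-100) with simple value noise.

    Random heights are chosen at evenly spaced control points, then
    smoothly interpolated in between. The same seed always rebuilds the
    same terrain.
    """
    rng = random.Random(seed)
    num_points = length // spacing + 2
    control = [rng.uniform(0.0, 100.0) for _ in range(num_points)]
    heights = []
    for x in range(length):
        i, frac = divmod(x, spacing)
        t = frac / spacing
        t = t * t * (3 - 2 * t)  # smoothstep easing for gentler slopes
        heights.append(control[i] * (1 - t) + control[i + 1] * t)
    return heights

terrain = value_noise_terrain(seed=42, length=64)
same = terrain == value_noise_terrain(seed=42, length=64)
print(same)  # True: the same seed reproduces the same terrain
print([round(h, 1) for h in terrain[:8]])
```

Layering several such noise passes at different spacings and strengths is a common way to add both broad hills and fine bumps.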